with a 5×4TB setup in RAIDZ2.

I took one 4TB disk offline, swapped it for a 6TB disk, and started a replace.

The remaining four 4TB disks are encrypted with LUKS; the new one is not (in the long run I want to replace all the other 4TB disks with non-LUKS 6TB disks, and this is the first).

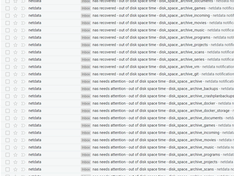

I started the replace sometime around noon, but the resilver keeps restarting:

code:

root@pve:~# zpool status bds

pool: bds

state: DEGRADED

status: One or more devices is currently being resilvered. The pool will

continue to function, possibly in a degraded state.

action: Wait for the resilver to complete.

scan: resilver in progress since Tue Dec 25 00:26:24 2018

53,5M scanned out of 12,6T at 5,35M/s, 687h31m to go

8,60M resilvered, 0,00% done

config:

NAME STATE READ WRITE CKSUM

bds DEGRADED 0 0 0

raidz2-0 DEGRADED 0 0 0

dm-name-disk1.1 ONLINE 0 0 0

disk2.1 ONLINE 0 0 0

dm-name-disk3.1 ONLINE 0 0 0

replacing-3 DEGRADED 0 0 0

dm-name-disk4.1 OFFLINE 0 0 0

ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH ONLINE 0 0 0 (resilvering)

dm-name-disk5.1 ONLINE 0 0 0

errors: No known data errors

root@pve:~# zpool status bds

pool: bds

state: DEGRADED

status: One or more devices is currently being resilvered. The pool will

continue to function, possibly in a degraded state.

action: Wait for the resilver to complete.

scan: resilver in progress since Tue Dec 25 00:27:11 2018

9,25M scanned out of 12,6T at 9,25M/s, 397h37m to go

0B resilvered, 0,00% done

config:

NAME STATE READ WRITE CKSUM

bds DEGRADED 0 0 0

raidz2-0 DEGRADED 0 0 0

dm-name-disk1.1 ONLINE 0 0 0

disk2.1 ONLINE 0 0 0

dm-name-disk3.1 ONLINE 0 0 0

replacing-3 DEGRADED 0 0 0

dm-name-disk4.1 OFFLINE 0 0 0

ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH ONLINE 0 0 0

dm-name-disk5.1 ONLINE 0 0 0

errors: No known data errors

root@pve:~# zpool status bds

pool: bds

state: DEGRADED

status: One or more devices is currently being resilvered. The pool will

continue to function, possibly in a degraded state.

action: Wait for the resilver to complete.

scan: resilver in progress since Tue Dec 25 00:32:58 2018

36,5M scanned out of 12,6T at 7,29M/s, 504h12m to go

2,86M resilvered, 0,00% done

config:

NAME STATE READ WRITE CKSUM

bds DEGRADED 0 0 0

raidz2-0 DEGRADED 0 0 0

dm-name-disk1.1 ONLINE 0 0 0

disk2.1 ONLINE 0 0 0

dm-name-disk3.1 ONLINE 0 0 0

replacing-3 DEGRADED 0 0 0

dm-name-disk4.1 OFFLINE 0 0 0

ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH ONLINE 0 0 0 (resilvering)

dm-name-disk5.1 ONLINE 0 0 0

errors: No known data errors
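The restarts are visible in the output above because the "resilver in progress since" timestamp keeps changing (00:26:24, 00:27:11, 00:32:58). A minimal sketch for catching that automatically, assuming the scan line keeps the wording shown in this thread: `resilver_start` and `watch_resilver` are names made up for this example, and the 60-second default interval is arbitrary.

```shell
# Print the resilver start timestamp found in `zpool status` output on stdin.
# Prints nothing when the scan line is not a running resilver.
resilver_start() {
    sed -n 's/^[[:space:]]*scan: resilver in progress since //p'
}

# Poll a pool and report every change of the resilver start time.
# The body runs in a subshell, so its variables stay local.
# Hypothetical helper: pool name and interval (seconds) are caller-supplied.
watch_resilver() (
    pool="$1"
    interval="${2:-60}"
    previous=""
    while true; do
        current="$(zpool status "$pool" | resilver_start)"
        if [ -z "$current" ]; then
            echo "no resilver in progress on $pool"
            break
        fi
        if [ -n "$previous" ] && [ "$current" != "$previous" ]; then
            echo "resilver restarted: $previous -> $current"
        fi
        previous="$current"
        sleep "$interval"
    done
)
```

Something like `watch_resilver bds 60` in a spare terminal would then log each restart instead of relying on manually repeated `zpool status` calls.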

The 4TB disks are all SMART OK (tests are run frequently), and this is the SMART output of the new 6TB disk (no actual SMART self-test has been run on it yet):

code:

root@pve:~# smartctl -a /dev/disk/by-id/ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH

smartctl 6.6 2016-05-31 r4324 [x86_64-linux-4.15.18-9-pve] (local build)

Copyright (C) 2002-16, Bruce Allen, Christian Franke, www.smartmontools.org

=== START OF INFORMATION SECTION ===

Model Family: Western Digital Red

Device Model: WDC WD60EFRX-68L0BN1

Serial Number: WD-WX61D48DL8AH

LU WWN Device Id: 5 0014ee 2657b382b

Firmware Version: 82.00A82

User Capacity: 6.001.175.126.016 bytes [6,00 TB]

Sector Sizes: 512 bytes logical, 4096 bytes physical

Rotation Rate: 5700 rpm

Device is: In smartctl database [for details use: -P show]

ATA Version is: ACS-2, ACS-3 T13/2161-D revision 3b

SATA Version is: SATA 3.1, 6.0 Gb/s (current: 6.0 Gb/s)

Local Time is: Tue Dec 25 00:34:34 2018 CET

SMART support is: Available - device has SMART capability.

SMART support is: Enabled

=== START OF READ SMART DATA SECTION ===

SMART overall-health self-assessment test result: PASSED

General SMART Values:

Offline data collection status: (0x00) Offline data collection activity

was never started.

Auto Offline Data Collection: Disabled.

Self-test execution status: ( 0) The previous self-test routine completed

without error or no self-test has ever

been run.

Total time to complete Offline

data collection: ( 1904) seconds.

Offline data collection

capabilities: (0x7b) SMART execute Offline immediate.

Auto Offline data collection on/off support.

Suspend Offline collection upon new

command.

Offline surface scan supported.

Self-test supported.

Conveyance Self-test supported.

Selective Self-test supported.

SMART capabilities: (0x0003) Saves SMART data before entering

power-saving mode.

Supports SMART auto save timer.

Error logging capability: (0x01) Error logging supported.

General Purpose Logging supported.

Short self-test routine

recommended polling time: ( 2) minutes.

Extended self-test routine

recommended polling time: ( 673) minutes.

Conveyance self-test routine

recommended polling time: ( 5) minutes.

SCT capabilities: (0x303d) SCT Status supported.

SCT Error Recovery Control supported.

SCT Feature Control supported.

SCT Data Table supported.

SMART Attributes Data Structure revision number: 16

Vendor Specific SMART Attributes with Thresholds:

ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW_VALUE

1 Raw_Read_Error_Rate 0x002f 100 253 051 Pre-fail Always - 0

3 Spin_Up_Time 0x0027 100 253 021 Pre-fail Always - 0

4 Start_Stop_Count 0x0032 100 100 000 Old_age Always - 1

5 Reallocated_Sector_Ct 0x0033 200 200 140 Pre-fail Always - 0

7 Seek_Error_Rate 0x002e 200 200 000 Old_age Always - 0

9 Power_On_Hours 0x0032 100 100 000 Old_age Always - 9

10 Spin_Retry_Count 0x0032 100 253 000 Old_age Always - 0

11 Calibration_Retry_Count 0x0032 100 253 000 Old_age Always - 0

12 Power_Cycle_Count 0x0032 100 100 000 Old_age Always - 1

192 Power-Off_Retract_Count 0x0032 200 200 000 Old_age Always - 0

193 Load_Cycle_Count 0x0032 200 200 000 Old_age Always - 7

194 Temperature_Celsius 0x0022 121 121 000 Old_age Always - 31

196 Reallocated_Event_Count 0x0032 200 200 000 Old_age Always - 0

197 Current_Pending_Sector 0x0032 200 200 000 Old_age Always - 0

198 Offline_Uncorrectable 0x0030 100 253 000 Old_age Offline - 0

199 UDMA_CRC_Error_Count 0x0032 200 200 000 Old_age Always - 0

200 Multi_Zone_Error_Rate 0x0008 100 253 000 Old_age Offline - 0

SMART Error Log Version: 1

No Errors Logged

SMART Self-test log structure revision number 1

No self-tests have been logged. [To run self-tests, use: smartctl -t]

SMART Selective self-test log data structure revision number 1

SPAN MIN_LBA MAX_LBA CURRENT_TEST_STATUS

1 0 0 Not_testing

2 0 0 Not_testing

3 0 0 Not_testing

4 0 0 Not_testing

5 0 0 Not_testing

Selective self-test flags (0x0):

After scanning selected spans, do NOT read-scan remainder of disk.

If Selective self-test is pending on power-up, resume after 0 minute delay.
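Reading that attribute table by eye gets tedious when the check is repeated. Below is a rough sketch that pulls the raw values out of `smartctl -A` output, assuming the ten-column layout shown above. `smart_raw` and `smart_counters_clean` are hypothetical helper names, and the four counters checked are a common rule of thumb for media or cabling trouble, not an official health verdict.

```shell
# Print the RAW_VALUE column of one attribute from `smartctl -A` output on stdin.
# Assumes the layout shown in this thread, where RAW_VALUE is field 10.
smart_raw() {
    awk -v name="$1" '$2 == name { print $10 }'
}

# Succeed only when a few commonly watched error counters are all zero.
# The attribute list is an assumption for this sketch; adjust it per drive.
smart_counters_clean() {
    smart_report="$(cat)"
    for smart_attr in Reallocated_Sector_Ct Current_Pending_Sector \
                      Offline_Uncorrectable UDMA_CRC_Error_Count; do
        smart_value="$(printf '%s\n' "$smart_report" | smart_raw "$smart_attr")"
        if [ "$smart_value" != "0" ]; then
            echo "$smart_attr raw value: ${smart_value:-missing}"
            return 1
        fi
    done
    return 0
}
```

Usage would be along the lines of `smartctl -A /dev/disk/by-id/ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH | smart_counters_clean`. Since no self-test has been logged on this disk yet, `smartctl -t long` on the same device would start the extended test that the output above estimates at 673 minutes.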

These are the other disks:

code:

lrwxrwxrwx 1 root root 9 dec 24 14:51 /dev/disk/by-id/ata-ST4000VN000-1H4168_Z304NVRN -> ../../sda

lrwxrwxrwx 1 root root 9 dec 24 14:51 /dev/disk/by-id/ata-ST4000VN000-1H4168_Z306C70G -> ../../sdc

lrwxrwxrwx 1 root root 9 dec 24 14:51 /dev/disk/by-id/ata-WDC_WD40EFRX-68WT0N0_WD-WCC4E4LRJ6JP -> ../../sde

lrwxrwxrwx 1 root root 9 dec 24 14:51 /dev/disk/by-id/ata-WDC_WD40EFRX-68WT0N0_WD-WCC4E4LRJ7SF -> ../../sdb

lrwxrwxrwx 1 root root 9 dec 25 00:36 /dev/disk/by-id/ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH -> ../../sdd

lrwxrwxrwx 1 root root 10 dec 25 00:36 /dev/disk/by-id/ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH-part1 -> ../../sdd1

lrwxrwxrwx 1 root root 10 dec 25 00:36 /dev/disk/by-id/ata-WDC_WD60EFRX-68L0BN1_WD-WX61D48DL8AH-part9 -> ../../sdd9

/dev/mapper

code:

lrwxrwxrwx 1 root root 7 dec 24 14:51 disk1.1 -> ../dm-2

lrwxrwxrwx 1 root root 7 dec 24 14:51 disk2.1 -> ../dm-3

lrwxrwxrwx 1 root root 7 dec 24 14:51 disk3.1 -> ../dm-4

lrwxrwxrwx 1 root root 7 dec 24 14:51 disk5.1 -> ../dm-5

I have replaced a 4TB disk in this system several times before without any problems. I suspect the new 6TB disk is not entirely OK?

Does anyone have experience with this? Should I start a SMART test on the new disk during a replace?

Would a reboot help?

I'm curious what tips the gurus have.

Edit:

I ran zpool detach on the new disk and everything appears to work smoothly again. The scrub is now running nicely too:

code:

root@pve:~/utils/scripts# zpool status bds

pool: bds

state: DEGRADED

status: One or more devices has been taken offline by the administrator.

Sufficient replicas exist for the pool to continue functioning in a

degraded state.

action: Online the device using 'zpool online' or replace the device with

'zpool replace'.

scan: scrub in progress since Tue Dec 25 01:19:42 2018

20,4G scanned out of 12,6T at 82,7M/s, 44h23m to go

0B repaired, 0,16% done

config:

NAME STATE READ WRITE CKSUM

bds DEGRADED 0 0 0

raidz2-0 DEGRADED 0 0 0

dm-name-disk1.1 ONLINE 0 0 0

disk2.1 ONLINE 0 0 0

dm-name-disk3.1 ONLINE 0 0 0

dm-name-disk4.1 OFFLINE 0 0 0

dm-name-disk5.1 ONLINE 0 0 0

errors: No known data errors

A SMART test on the new disk has been started.

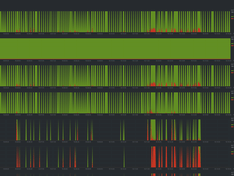

Edit2: the long SMART test finished without errors; I ran the replace again and got the same behaviour. The resilver keeps restarting and ZFS performance collapses completely (other pools get timeouts too) until I detach the 6TB disk...

I'll try a different 6TB disk tomorrow. Hard to understand...

Edit3: I just created a separate pool (test) with the 6TB disk. Copying data from one pool to the other runs perfectly at 130MB/s, and a scrub on the test pool runs smoothly as well. That makes it even harder to understand why a replace does not work. With this test I can at least rule out bad cabling, and the disk seems to perform well enough.

ZFS has no knowledge of the underlying LUKS encryption, does it? The only difference from the other disks in the main pool (bds) is that the new 6TB disk is not LUKS-encrypted...

So, a quick summary:

- The new 6TB disk passed the SMART long test.
- Tested it by creating a single-disk pool.
- Copying data to the 6TB disk runs at 130MB/s.
- A scrub on the test pool runs at the expected speed.

But adding that same disk to the existing RAIDZ2 pool makes the resilver restart over and over, and everything ZFS-related gets timeouts.

I'm afraid a different disk will make little difference.

To test:

- LUKS-encrypt the disk like the rest of the bds pool

- a different disk

- ...??

Final edit:

Whaaaat, with the disk LUKS-encrypted the resilver runs as it should:

code:

root@pve:~/utils/disks# zpool status bds

pool: bds

state: DEGRADED

status: One or more devices is currently being resilvered. The pool will

continue to function, possibly in a degraded state.

action: Wait for the resilver to complete.

scan: resilver in progress since Wed Dec 26 10:19:11 2018

209G scanned out of 12,6T at 211M/s, 17h7m to go

41,3G resilvered, 1,62% done

config:

NAME STATE READ WRITE CKSUM

bds DEGRADED 0 0 0

raidz2-0 DEGRADED 0 0 0

dm-name-disk1.1 ONLINE 0 0 0

disk2.1 ONLINE 0 0 0

dm-name-disk3.1 ONLINE 0 0 0

replacing-3 DEGRADED 0 0 0

dm-name-disk4.1 OFFLINE 0 0 0

disk4.2 ONLINE 0 0 0 (resilvering)

dm-name-disk5.1 ONLINE 0 0 0

errors: No known data errors

How can that be explained?

So I cannot convert a LUKS-encrypted pool to non-LUKS disks.
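For anyone wanting to repeat the workaround from the final edit, here is a rough dry-run sketch. The thread does not show the exact commands that were used, so this is an assumption built from the status output: the mapping name `disk4.2` and the old member `dm-name-disk4.1` come from that output, `/dev/sdX` is a placeholder, and `plan_luks_replace` is a hypothetical helper that only prints the commands. Note that `cryptsetup luksFormat` wipes whatever is on the target disk.

```shell
# Print (do not execute) the commands for replacing a pool member with a
# LUKS-backed device. All four arguments are supplied by the caller:
#   $1 pool name, $2 old pool member, $3 raw disk, $4 LUKS mapping name
plan_luks_replace() {
    plan_pool="$1"
    plan_old="$2"
    plan_disk="$3"
    plan_mapping="$4"
    # Create the LUKS container on the raw disk (destroys existing data).
    echo "cryptsetup luksFormat $plan_disk"
    # Unlock it so it appears as /dev/mapper/<mapping name>.
    echo "cryptsetup open $plan_disk $plan_mapping"
    # Replace the old pool member with the unlocked mapping.
    echo "zpool replace $plan_pool $plan_old /dev/mapper/$plan_mapping"
}
```

Calling `plan_luks_replace bds dm-name-disk4.1 /dev/sdX disk4.2` prints the three commands for review, so nothing touches the disk until they are run by hand.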

[Edited by A1AD on 26-12-2018 10:36, 226% changed]
